How I coped with a Twitter plugin too close to malware

On Friday the 16th of March a few friends sent me tweets saying that Google's Chrome browser had warned them against visiting blog.arhg.net. It was worried about malware.

I couldn't recreate the warning. I couldn't find any sign of malware or of any hacking. A few hours later everyone had confirmed that the warning had been dropped.

On Monday the 19th - the start of a week of business travel for me - the warning came back. For everyone. Google's search results warned people about malware and blocked clicks to the site. The timing wasn't great.

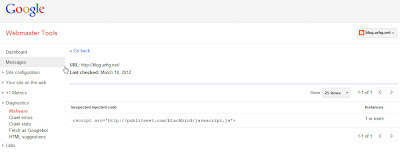

By Tuesday the 20th I found some time to get online again and investigate. Google Webmaster Console was so useful. Thanks Google. The console displayed a malware warning where I couldn't miss it and sent me an email alert. It also listed the pages it was worried about and actually highlighted the worrying code.

The problem was the Publitweet Twitter plugin, once covered by TechCrunch. Google was worried about the plugin's home domain, and because the plugin made a JavaScript call back to that domain, Google was worried that any site using it might also be a malware risk. The good news was that no malware had actually been detected on my blog.

It took a few seconds to fix the problem. I just deleted the suspect code.
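Webmaster Console pointed me straight at the offending code, but if you want to check your own templates for the same kind of call-back to a flagged domain, a rough scan of external `<script>` tags is one way to do it. The sketch below is a minimal Python illustration, assuming you already have the page's HTML as a string. `flagged-plugin.example` is a hypothetical placeholder, not Publitweet's real domain, and `find_flagged_scripts` is an illustrative helper, not part of any Google tool. It only catches `<script src="...">` references, not scripts injected at runtime.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class ScriptSourceFinder(HTMLParser):
    """Collects the src attribute of every <script> tag in an HTML document."""

    def __init__(self):
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        # html.parser passes tag and attribute names in lowercase.
        if tag == "script":
            for name, value in attrs:
                if name == "src" and value:
                    self.sources.append(value)


def find_flagged_scripts(html, flagged_domains):
    """Return script URLs whose host is a flagged domain or a subdomain of one.

    Hypothetical helper for illustration; the domain list is up to you.
    """
    finder = ScriptSourceFinder()
    finder.feed(html)
    finder.close()
    hits = []
    for src in finder.sources:
        # Protocol-relative URLs ("//host/path") still yield a hostname;
        # relative paths ("/js/app.js") yield None, treated as "".
        host = (urlparse(src).hostname or "").lower()
        for domain in flagged_domains:
            domain = domain.lower()
            if host == domain or host.endswith("." + domain):
                hits.append(src)
                break
    return hits


if __name__ == "__main__":
    # Hypothetical template snippet; swap in your own page's HTML.
    page = """
    <html><body>
      <script src="https://static.example.com/app.js"></script>
      <script src="//widgets.flagged-plugin.example/embed.js"></script>
      <script>console.log("inline");</script>
    </body></html>
    """
    for url in find_flagged_scripts(page, ["flagged-plugin.example"]):
        print("Suspect script:", url)
```

Matching on the parsed hostname rather than a plain substring search avoids false hits such as `notflagged-plugin.example`, while still catching subdomains of the flagged domain.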

Then I hit the "Request Review" button provided by Google alongside the malware warning.

In hindsight, that may have been a mistake. I waited.

I put together a Google+ post to warn other webmasters about Publitweet, and in it I wondered how long the re-scan would take.

Over the next couple of days Google's Webmaster Console warned me that traffic to key pages of the site had dropped suspiciously. It was obvious why.

Google had stopped crawling the site. Right up until I requested the review, Google had been checking the site every day. The list of suspect URLs had previously held a few entries, but now it stopped with just the two URLs Google had looked at on the 20th.

I tried to encourage Google back to the site by doing a manual "Fetch as Googlebot" request on the URLs. The fetch worked, and the URLs were resubmitted to the index. Nothing happened on the malware warning front.

Yesterday, after about my 100th tweet to say my site had malware, I started a Google+ thread limited to just a few people - helpful Google engineers, malware experts, etc - and shared the problem. Within a few minutes I got my first reply - from someone at Google who usually goes out of their way to help. I won't name-drop - but thanks.

After a few more comments from other helpful souls, the consensus was clear: I might have hit that "Request Review" button too quickly. There was concern that the server might still have held on to a cached copy of the risky code. I had hoped this wouldn't be the case, since the blog is hosted on blogger.com. Google owns that. Surely it can cope with its own cache?

One engineer suggested that malware review requests can sometimes be turned around in a day, but that 2 to 3 days is about right. I resolved to re-request the review and sit tight for at least 3 days.

That's the kind of conversation I couldn't have had on Twitter.

By the end of the day, less than a handful of hours later, Chrome stopped warning against visiting the site and an hour after that the Google SERPs block was lifted.

The takeaways:

- There's always a risk in adding a plugin

- Always sign up to Google's Webmaster Console

- Beware caches - perhaps wait a while before asking for a malware review

- Malware reviews shouldn't take much more than four days